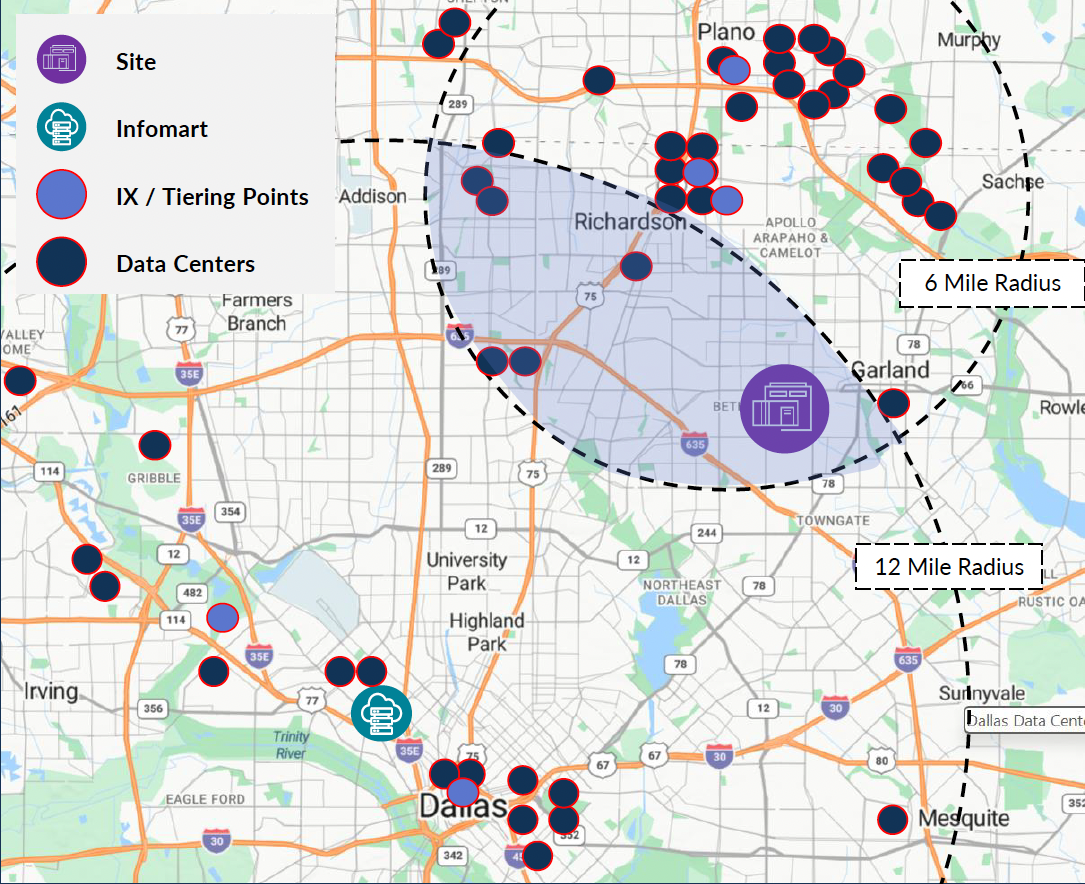

54MW of purpose-built AI inference capacity in the premium North Dallas Corridor — one of the fastest-growing AI data center markets in the United States. This AI inference data center in Dallas is sited for fiber density, proximity to population centers, and fast utility energization on the ERCOT grid.


AI inference workloads are distributed, latency-sensitive, and scale continuously with user adoption. Inferent™ facilities are purpose-built AI inference data centers optimized for these dynamics: right-sized at 30–60MW, sited in high-connectivity markets like the North Dallas AI data center corridor, and designed for delivery in months. Capacity is available as an AI data center wholesale lease or an AI inference build-to-suit.

54MW of purpose-built AI inference capacity in one of the fastest-growing DFW AI data center markets in the United States. This North Dallas AI data center is sited for fiber density, proximity to population centers, and fast utility energization on the ERCOT grid.

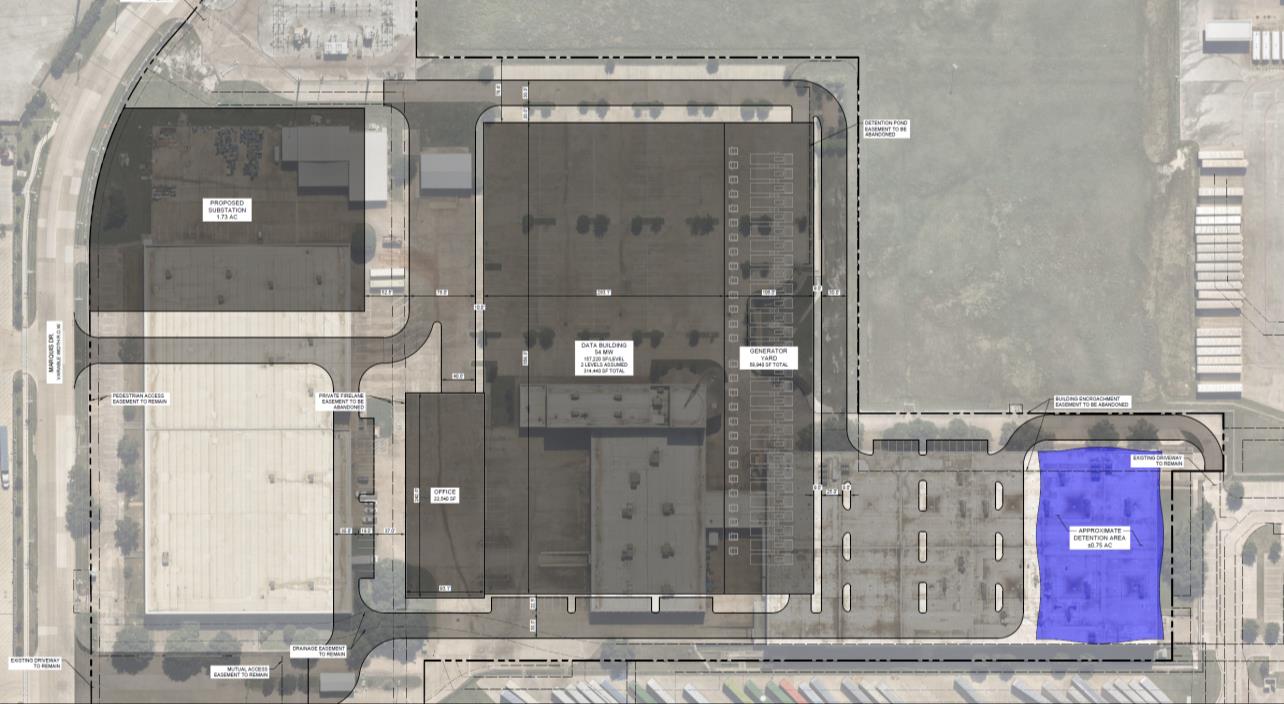

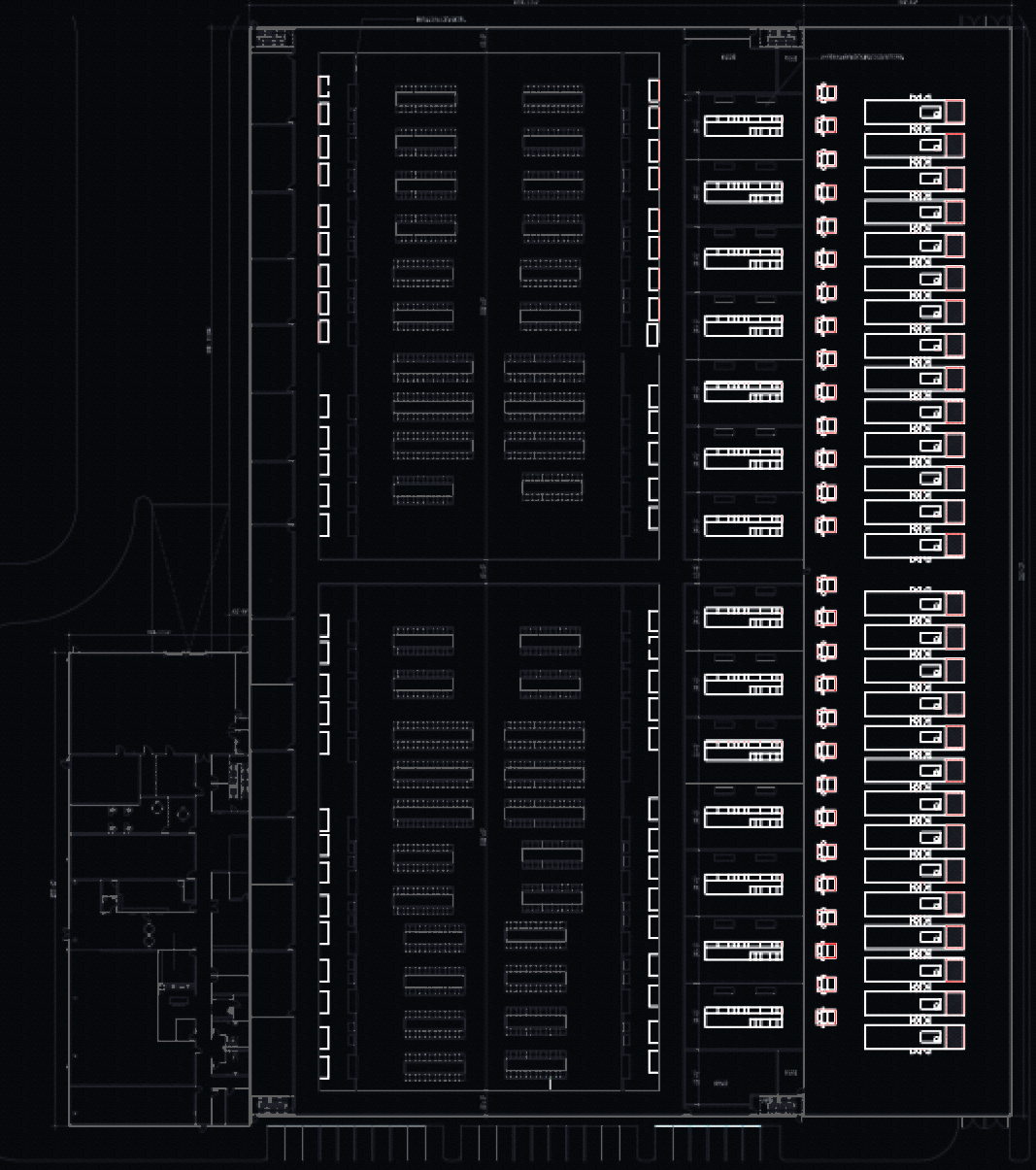

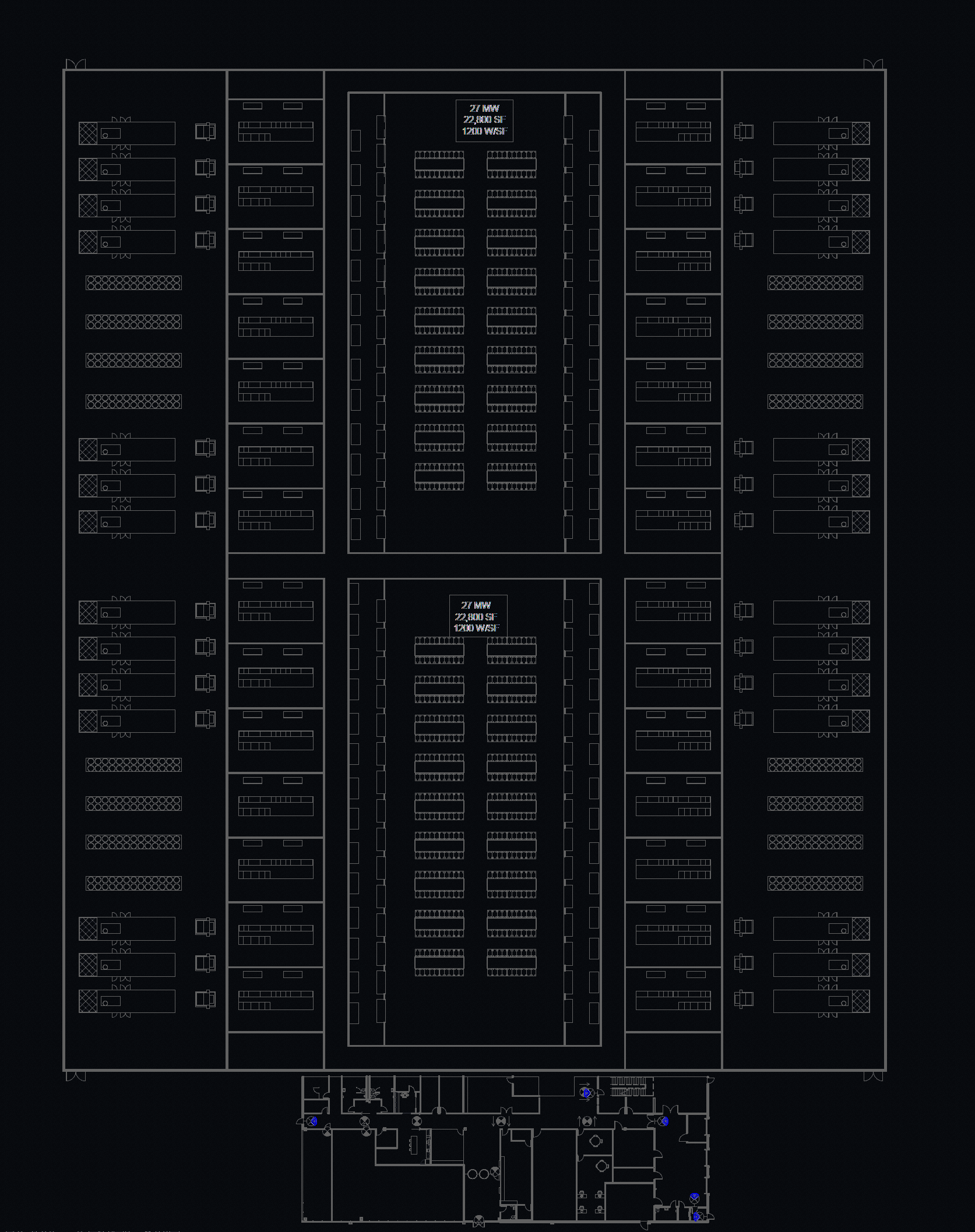

NDC-1's basis of design supports 54MW of critical IT load across configurable data halls, optimized for the power density, liquid cooling, and rack-level power delivery that next-generation AI inference platforms demand. This AI inference data center in Dallas is designed to support wholesale lease deployments and AI inference build-to-suit configurations. Here's how the facility maps to NVIDIA's latest inference platform.

\* Estimated for a frontier-class LLM (~200B+ params) at FP4, ~40% utilization, ~500 GFLOP per token, ~40 tok/s per stream.
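The footnote's assumptions can be turned into a rough throughput estimate. The sketch below is illustrative only: it takes the page's full-buildout figure of approximately 1 zettaFLOP of FP4 compute as the peak rate, and the function and constant names are hypothetical, not part of any published Inferent™ model.

```python
# Rough token-throughput estimate from the footnote's assumptions.
# All names here are illustrative; the inputs come from figures on this page.

def token_throughput(peak_flops: float, utilization: float, flop_per_token: float) -> float:
    """Sustained tokens per second = usable FLOP/s divided by FLOP per token."""
    return peak_flops * utilization / flop_per_token

def concurrent_streams(tokens_per_second: float, tokens_per_stream: float) -> float:
    """How many streams can be served at a fixed per-stream token rate."""
    return tokens_per_second / tokens_per_stream

PEAK_FP4_FLOPS = 1e21       # ~1 zettaFLOP of FP4 compute at full buildout (page figure)
UTILIZATION = 0.40          # ~40% utilization (footnote)
FLOP_PER_TOKEN = 500e9      # ~500 GFLOP per token (footnote)
TOKENS_PER_STREAM = 40      # ~40 tok/s per stream (footnote)

tokens_per_s = token_throughput(PEAK_FP4_FLOPS, UTILIZATION, FLOP_PER_TOKEN)
streams = concurrent_streams(tokens_per_s, TOKENS_PER_STREAM)

print(f"Estimated throughput: {tokens_per_s:.2e} tokens/s")
print(f"Estimated concurrent streams: {streams:.2e}")
```

Under these inputs the estimate works out to roughly 800 million tokens per second, or about 20 million concurrent streams. Treat it as an upper-bound sketch: real throughput depends on model architecture, batching, memory bandwidth, and networking.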

The facility is designed for extreme configuration flexibility. Hall sizes, power density, and cooling topology (air-cooled or direct liquid cooling) can be tailored to tenant requirements. The 7/6 distributed redundant electrical system uses 2.25MW power blocks and can be upgraded to block redundant with static transfer switches. The mechanical system uses adiabatic air-cooled magnetic bearing chillers (~50 GPM average water use); high-temp and low-temp DLC loops are available at tenant option.
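In a 7/6 distributed redundant system, seven power blocks share a load that six could carry, so each block runs at no more than 6/7 of its rating and the remaining six can absorb a failed block. The sketch below works through that arithmetic. It assumes that 2.25MW is the rated capacity of each block, which the page does not state explicitly, and it ignores mechanical loads and losses; the names are hypothetical.

```python
from fractions import Fraction
from math import ceil

CRITICAL_LOAD_KW = 54_000   # 54MW of critical IT load (page figure)
BLOCK_RATING_KW = 2_250     # ASSUMPTION: 2.25MW is each power block's rated capacity
BLOCKS_INSTALLED = 7        # the "7" in 7/6
BLOCKS_REQUIRED = 6         # the "6" in 7/6

def max_block_loading(installed: int, required: int) -> Fraction:
    """Highest steady-state loading per block that still tolerates one block failing."""
    return Fraction(required, installed)

def blocks_needed(load_kw: int, block_rating_kw: int, installed: int, required: int) -> int:
    """Number of blocks needed to carry load_kw at the redundant loading limit."""
    usable_kw = block_rating_kw * max_block_loading(installed, required)
    # Exact rational arithmetic avoids floating-point round-up errors in ceil().
    return ceil(Fraction(load_kw) / usable_kw)

loading = max_block_loading(BLOCKS_INSTALLED, BLOCKS_REQUIRED)
blocks = blocks_needed(CRITICAL_LOAD_KW, BLOCK_RATING_KW, BLOCKS_INSTALLED, BLOCKS_REQUIRED)

print(f"Max steady-state loading per block: {float(loading):.1%}")
print(f"Blocks needed for {CRITICAL_LOAD_KW // 1000}MW: {blocks}")
```

Under that assumption the numbers line up neatly: 28 blocks (four groups of seven), each loaded to about 85.7%, carry exactly 54MW.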

NDC-1's primary cooling system, built on YORK® YVAM magnetic bearing chillers, uses no water at all. Adiabatic trim cooling supplements it on the hottest days, averaging about 50 GPM across the year, roughly the water use of a restaurant, hotel, or car wash.

The primary cooling system uses no water at all. Adiabatic pre-cooling activates only when ambient temperatures rise, roughly 60 days a year in the Dallas climate, using a fraction of the water that conventional cooling-tower designs require. Direct liquid cooling loops (high-temp and low-temp) are available at tenant option for next-generation GPU platforms.
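To put the water figures in annual terms, the short sketch below converts the stated 50 GPM yearly average into gallons per year. The per-active-day figure additionally assumes that all adiabatic water use falls within the roughly 60 days mentioned above, which is a simplification for illustration rather than a published specification.

```python
AVG_GPM = 50                 # average water use across the year (page figure)
ACTIVE_DAYS = 60             # roughly 60 days a year of adiabatic operation (page figure)
MINUTES_PER_DAY = 24 * 60
DAYS_PER_YEAR = 365

# Annual volume implied by a 50 GPM average over the whole year.
annual_gallons = AVG_GPM * MINUTES_PER_DAY * DAYS_PER_YEAR

# ASSUMPTION: all of that volume is consumed on the ~60 active days.
gallons_per_active_day = annual_gallons / ACTIVE_DAYS

print(f"Annual water use: {annual_gallons:,} gallons")
print(f"Average per active day: {gallons_per_active_day:,.0f} gallons")
```

That comes to about 26.3 million gallons per year, or roughly 438,000 gallons per active day under the stated assumption.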

Provident Data Centers is a vertically integrated data center developer headquartered in Dallas, TX. The Provident team brings 85 years of focused data center and energy infrastructure experience. Provident has developed more than 5 GW of powered land, with a robust pipeline of 70+ buildings. Our team members have delivered over 540MW of high-performance data center capacity across tier 1 and tier 2 U.S. markets for hyperscale, enterprise, and colocation tenants.

NDC-1 is in active development for delivery in 2027. Whether you need an AI data center wholesale lease or an AI inference build-to-suit, tell us your deployment size, timeline, and workload profile, and we'll respond within one business day with a capacity proposal.

Additional markets in development. NDC-1 is the first Inferent™ facility — not the last. We are actively evaluating sites across multiple U.S. markets with strong connectivity and available power.

Pipeline Active

An AI inference data center is a facility purpose-built to run trained AI models in production: serving real-time predictions, generating text, processing images, and powering AI-driven applications at scale. Unlike training facilities that focus on building models, inference data centers are optimized for low latency, high availability, and continuous throughput close to end users.

Inferent™ facilities are designed from the ground up for AI inference workloads. That means high-density rack power (200kW+), direct liquid cooling as a standard option, network-first site selection for fiber density and low latency, and flexible hall configurations that scale with tenant demand. Traditional data centers retrofit these capabilities; Inferent™ builds them in from day one.

The DFW metroplex is one of the fastest-growing AI data center markets in the United States. The North Dallas Corridor offers dense fiber connectivity (AT&T, FiberLight, LOGIX, Segra, Zayo), proximity to the Infomart carrier hotel, fast utility energization on the deregulated ERCOT grid, and competitive power costs. Dallas absorbed over 470MW of data center capacity in H2 2025 alone.

NDC-1 offers 54MW of critical IT capacity available for AI data center wholesale lease, with configurable data halls that can be tailored to tenant requirements. Build-to-suit options are also available for organizations that need custom power density, cooling topology, or hall configurations for their specific AI inference deployment.

NDC-1's basis of design supports next-generation GPU platforms including the NVIDIA Vera Rubin NVL72, with 200kW per rack, direct liquid cooling (high-temp and low-temp loops), and a 7/6 distributed redundant electrical system upgradeable to block redundant. At full buildout, the facility can house 270 NVL72 racks delivering approximately 1 zettaFLOPS of FP4 inference compute.
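The rack count follows directly from the power figures. As a minimal sketch, assuming the full 54MW of critical IT load is allocated to NVL72 racks at 200kW each, the arithmetic looks like this; the per-rack compute value is only what the page's facility-level figure implies, not a quoted NVIDIA specification.

```python
CRITICAL_LOAD_KW = 54_000     # 54MW of critical IT capacity (page figure)
RACK_POWER_KW = 200           # 200kW per NVL72 rack (page figure)
FACILITY_FP4_FLOPS = 1e21     # approximately 1 zettaFLOP of FP4 compute (page figure)

# ASSUMPTION: every kW of critical load goes to NVL72 racks.
racks = CRITICAL_LOAD_KW // RACK_POWER_KW

# Per-rack FP4 compute implied by the facility-level figure.
implied_per_rack_flops = FACILITY_FP4_FLOPS / racks

print(f"Racks at full buildout: {racks}")
print(f"Implied FP4 compute per rack: {implied_per_rack_flops / 1e18:.2f} exaFLOPS")
```

This reproduces the 270-rack figure and implies roughly 3.7 exaFLOPS of FP4 compute per rack.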

A Provident Data Centers company
